You’ve read about GEO. You’ve run a few searches. You may have even briefed your team. And yet one of these five beliefs is almost certainly still shaping your strategy—and limiting your results.

Reading time: 10–12 minutes | Level: Beginner to Intermediate

What You’ll Learn in This Guide

By the end of this article, you will understand:

- Why strong SEO does not automatically translate into AI visibility—and what the data shows

- Why your own website is the weakest signal AI has, not the strongest

- Why publishing more content without structural changes produces no GEO results

- Why a single strategy across ChatGPT, Perplexity, and Google AI is leaving significant citation opportunity unrealised

- Why GEO is not a one-time project—and what a sustainable visibility strategy actually requires

1. Why These Beliefs Are So Costly

Here is what makes these five beliefs particularly damaging: none of them are unreasonable.

Each one is a logical inference from how marketing used to work. Each one is held by founders who are paying attention, not the ones who have ignored AI search entirely. And each one produces real, measurable harm—not because it’s obviously wrong, but because it’s just plausible enough to delay action for months.

According to McKinsey, only 16% of brands currently track their performance in AI search. The other 84% have no idea whether they are visible, invisible, or actively being misrepresented in the AI-generated answers their potential customers are reading every day.

The five beliefs below explain how brands end up in that 84%.

💡 Key Insight

Between 40% and 60% of cited sources in AI-generated answers change month to month. Brands that are visible today are not guaranteed to be visible next month. The beliefs that delay investment in GEO are not just costing brands current visibility—they are costing them the compounding advantage that early movers are building right now.

2. The 5 Beliefs—And What the Data Actually Shows

Belief 1: “My SEO Is Strong, So My AI Visibility Should Be Fine.”

Why founders believe it: SEO and GEO feel like variations of the same discipline. Both involve search. Both involve content. Both involve being found online. It follows logically that strong SEO would provide some degree of AI visibility.

Why it’s wrong: The overlap between brands that rank in Google’s top 10 and brands cited in AI-generated answers has dropped to below 20%, according to Brandlight research. A brand can be on page one of Google for its most important keywords and be completely absent from every AI recommendation its potential customers read.

The mechanisms are fundamentally different.

SEO earns you a position in a ranked list. Google evaluates your page for keyword relevance, backlinks, technical quality, and on-page signals—and places you somewhere in a set of results.

GEO earns you a citation in a generated answer. AI engines evaluate your brand for entity consistency, third-party editorial trust, and content extractability—and either include you in a synthesised recommendation or ignore you entirely. These are not variations of the same game.

The data that makes this concrete: Research shows that 80% of LLM citations don’t even rank in Google’s top 100 for the same query. The brands AI recommends are not, in the main, the brands Google ranks highest. They are the brands with the clearest, most consistent citation patterns across the third-party sources AI engines already trust.

The signal that tells you this belief is costing you: Your content ranks well in Google but rarely appears in AI-generated answers. When it does appear, it’s cited for peripheral points rather than your core value proposition.

📌 Key Takeaway

Think of SEO as the foundation and GEO as a separate layer built on top of it. Strong SEO is necessary: it provides the technical infrastructure and domain authority that GEO builds from. But doing SEO well does not do GEO for you. Both require deliberate, distinct investment. For a full breakdown of how these relate to each other, “Traditional SEO vs AEO vs GEO vs AI SEO” is worth a read.

Belief 2: “AI Learns From My Website, So If My Website Is Good, I’ll Be Cited.”

Why founders believe it: Your website is the most complete, most accurate source of information about your business. It’s natural to assume AI draws heavily from it when generating answers about your brand.

Why it’s wrong: AI engines—ChatGPT in particular—heavily suppress brand-owned content. Your website is the weakest signal AI has access to, not the strongest. AI is specifically looking for what independent third parties say about you, not what you say about yourself.

The most cited sources in ChatGPT responses are high-authority editorial publications, independent review platforms, and established community forums. A well-written, well-optimised website with no third-party editorial coverage will rarely be cited—however accurate or detailed its content is.

There is also a technical issue most founders miss entirely.

Many brands are unknowingly blocking AI crawlers through their robots.txt file. Cloudflare changed its default configuration in 2024 to automatically block AI bots, which means brands that use Cloudflare and haven’t reviewed their settings may have been completely invisible to ChatGPT for over a year. Not ranking low. Not being deprioritised. Completely invisible.

You can verify this in under two minutes. Go to your website URL and add /robots.txt at the end. Look for a User-agent: GPTBot line followed by a Disallow: / rule. If it is there, OpenAI’s crawler has been told not to read your website at all. This needs to be fixed before anything else, because no other GEO investment produces results until AI can access your content.
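The same check can be scripted if you have several domains to review. What follows is a minimal sketch using Python’s standard-library robots.txt parser. The sample file contents and the helper name is_crawler_allowed are illustrative assumptions, not taken from any real site.

```python
from urllib.robotparser import RobotFileParser


def is_crawler_allowed(robots_txt: str, user_agent: str, path: str = "/") -> bool:
    """Return True if the given robots.txt text lets user_agent fetch path."""
    parser = RobotFileParser()
    # parse() works on the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, path)


# Hypothetical robots.txt that blocks GPTBot and allows everyone else.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    for bot in ("GPTBot", "Googlebot"):
        status = "allowed" if is_crawler_allowed(SAMPLE_ROBOTS_TXT, bot) else "blocked"
        print(f"{bot}: {status}")
```

To test a live site, paste the contents of its /robots.txt into the function, or use the parser’s set_url() and read() methods to fetch the file. Note that this only reads robots.txt. It will not detect bots that are blocked at the CDN or firewall level, so those settings still need a manual review.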

The data that makes this concrete: According to Stacker, distributing content to a range of third-party publications can increase AI citations by up to 325% compared to publishing only on your own site. That is not a marginal difference. It is the difference between appearing in AI answers and not appearing at all.

The signal that tells you this belief is costing you: You have strong owned content but competitors with weaker websites appear ahead of you in AI answers—because they have coverage in publications, forums, and community platforms that AI engines trust.

⚡ Quick Win

Check your robots.txt file today. Then open ChatGPT and type “What is [your business name]?” Read the description carefully. If it is vague, inaccurate, or uses hedging language such as “reportedly,” “claims to,” or “may offer,” your entity signals are inconsistent and AI lacks the confidence to recommend you. Both are fixable. Neither will fix itself.
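If you save the descriptions AI gives of your brand each month, scanning them for hedging language can be automated. The sketch below is one simple way to do it, assuming a plain phrase list. The three phrases come from the text above. The function name find_hedging and the sample sentence are hypothetical.

```python
import re

# Hedging phrases named in the article; extend this list for your own audits.
HEDGING_PHRASES = ("reportedly", "claims to", "may offer")


def find_hedging(description: str, phrases=HEDGING_PHRASES) -> list[str]:
    """Return the hedging phrases that occur in description, ignoring case."""
    found = []
    for phrase in phrases:
        # \b keeps a phrase from matching inside a longer word.
        pattern = rf"\b{re.escape(phrase)}\b"
        if re.search(pattern, description, flags=re.IGNORECASE):
            found.append(phrase)
    return found


if __name__ == "__main__":
    # Hypothetical AI-generated description of a made-up company.
    sample = "Acme Analytics reportedly provides dashboards and may offer consulting."
    print(find_hedging(sample))
```

A phrase match is only a prompt to read the description more closely. It does not replace checking the facts against your current positioning.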

Belief 3: “I Just Need More Content—Quantity Will Eventually Get Me Cited.”

Why founders believe it: Content volume has always been a lever in traditional SEO. More pages, more keywords, more surface area for Google to index. More chances to rank. The same logic applied to AI visibility feels reasonable.

Why it’s wrong: AI does not reward volume. It rewards extractability.

A single page that opens with a direct, specific, verifiable answer to a question your customers ask AI—backed by third-party authority—is worth more for GEO than fifty pages of general content that buries the answer in the fourth paragraph.

The data is precise on this: 44.2% of all LLM citations come from the first 30% of a piece of content—the introduction—according to Growth Memo research, February 2026. If your content does not answer the question directly in the opening, AI may not cite it at all, regardless of how thorough the rest of the piece is.

More content without structural change is more of what is not working.

The test for every piece of content is one question: can AI extract a specific, confident, verifiable claim from this page in under 30 seconds? Not a brand statement. Not a mission. A specific, factual claim that AI can pull, cite, and repeat in a generated answer. If the answer is no, the problem is not volume. The problem is structure.
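One part of this test can be roughly approximated in code: checking whether a page’s key claim appears in its opening. The sketch below assumes the 30% cut-off from the citation figure above and measures it in words. The function name answer_in_opening and the sample text are hypothetical, and a substring match is a crude proxy for what an AI engine actually extracts.

```python
def answer_in_opening(text: str, key_claim: str, opening_share: float = 0.30) -> bool:
    """Return True if key_claim appears within the first opening_share of the words in text."""
    words = text.split()
    # Always inspect at least one word so very short texts are still checked.
    cutoff = max(1, int(len(words) * opening_share))
    opening = " ".join(words[:cutoff]).lower()
    # Collapse whitespace in the claim so line breaks do not prevent a match.
    claim = " ".join(key_claim.split()).lower()
    return claim in opening


if __name__ == "__main__":
    # Hypothetical page text: the claim comes first, followed by filler.
    page = "Our audit takes 48 hours. " + "More background follows here. " * 10
    print(answer_in_opening(page, "audit takes 48 hours"))
```

A page that fails this check is not necessarily weak, but it is a good candidate for the manual 30-second test.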

The data that makes this concrete: Google’s AI Mode has extremely high volatility. When the same query was run three times, the results overlapped with one another just 9.2% of the time, according to SE Ranking research, August 2025. Volume alone cannot compensate for structural weakness. What appears reliably despite this volatility is consistently structured content, not the largest quantity of content.

The signal that tells you this belief is costing you: You have published consistently but citation frequency has not improved. AI references your brand occasionally, but not for the queries that matter most to your business.

📌 Key Takeaway

Audit your existing content before creating anything new. For each key page, run the 30-second test. If a page fails, restructure it. Lead with the answer. Follow with the evidence. Close with the context. At OddScrew, we call these Answer Assets: content designed to be cited, not just read. Ten well-structured Answer Assets will consistently outperform fifty pages of general content in AI search.

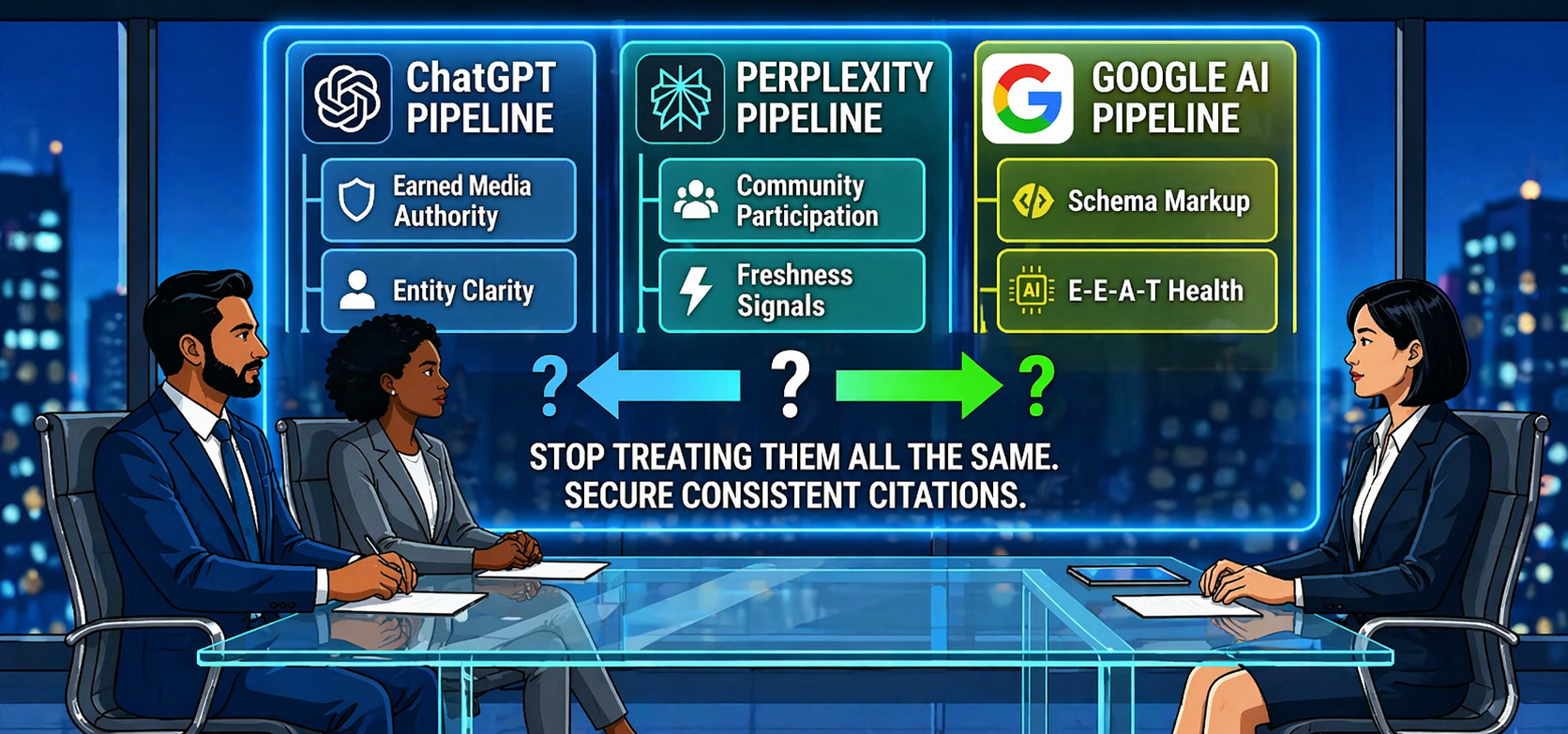

Belief 4: “ChatGPT, Perplexity, and Google AI All Work the Same Way—One Strategy Covers All Three.”

Why founders believe it: They are all AI search platforms. They all generate answers. They all cite sources. Treating them as one channel is the operationally simpler approach—and it seems logical that what works on one would transfer to the others.

Why it’s wrong: Only 11% of domains are cited by both ChatGPT and Perplexity, according to an analysis of 680 million citations by Avery AI. A separate 2026 study found a 46x difference in citation rates for the same brand across the same queries on different platforms.

These are not variations of the same system. They are separate ecosystems with different retrieval mechanisms, different source preferences, and different content signals—and a strategy built entirely for one can actively fail on another.

ChatGPT has the largest user base and the highest bar for citation. It suppresses brand-owned content more aggressively than any other platform and draws heavily from training data—prioritising high-authority editorial sources. Earned media and third-party authority are non-negotiable here.

Perplexity retrieves and cites live web content more transparently. It rewards recency more strongly than ChatGPT—fresh, well-structured content can gain traction here faster than on any other platform. It is also more likely to cite niche publications and community sources that ChatGPT might overlook.

Google AI Overviews integrate deeply with Google’s existing authority signals. Domain authority, backlinks, structured data markup, and E-E-A-T signals carry significantly more weight here than on the other two platforms. For brands with strong traditional SEO foundations, Google AI Overviews are typically the most accessible starting point for AI visibility work.

The data that makes this concrete: According to SparkToro research from January 2026, when ChatGPT or Google AI is asked the same question 100 times, there is less than a 1 in 100 chance that any two responses contain the same list of brands. The citation landscape is volatile on every platform. Each one is volatile in different ways.

The signal that tells you this belief is costing you: You appear on one AI platform but not others. Or you have optimised for Google AI Overviews—where SEO foundations transfer most directly—but have done nothing specific for ChatGPT, where the majority of high-intent AI search actually happens.

📌 Key Takeaway

Build a universal foundation first: direct answer structure, consistent entity signals, schema markup, and factual specificity with named sources. These signals work across all three platforms. Then layer platform-specific tactics on top: earned media and high-authority editorial coverage for ChatGPT, fresh structured content for Perplexity, and domain authority and technical signals for Google AI Overviews.
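As one illustration of the schema markup mentioned above, here is a minimal sketch in Python that builds a schema.org Organization description as JSON-LD. The company name, URL, description, and profile links are hypothetical placeholders, and organization_jsonld is an illustrative helper, not part of any library.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> str:
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs points to the same entity's profiles on other sites.
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # All values below are made-up placeholders.
    markup = organization_jsonld(
        name="Example Co",
        url="https://www.example.com",
        description="Example Co provides bookkeeping software for freelancers.",
        same_as=["https://www.linkedin.com/company/example-co"],
    )
    # JSON-LD is normally embedded in the page inside a script tag.
    print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keeping the name and description here identical to what appears on your third-party profiles is one practical way to support consistent entity signals.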

Belief 5: “GEO Is a One-Time Fix—Once I’m Cited, I’m Set.”

Why founders believe it: Traditional SEO rankings, once earned through consistent effort, tend to be relatively stable. A page that ranks well today will often still rank reasonably well in three months. It is understandable—and incorrect—to assume AI citations work the same way.

Why it’s wrong: Between 40% and 60% of the sources AI cites change month to month, according to AirOps research. AI citation is not a stable ranking. It is a dynamic, competitive landscape that shifts constantly as new content is published, new editorial sources gain authority, competitors invest in their own GEO, and AI models update their retrieval behaviour.

A brand that earns consistent citation today and stops investing can lose that position within weeks. There is no notification when this happens. No ranking drop alert. No dashboard that tells you a competitor has displaced you. The loss is silent—and by the time you notice a drop in leads or enquiries, the gap may have been widening for months.

There is also a compounding dynamic that works in reverse. Brands that maintain consistent citation authority build an advantage that becomes harder for competitors to break into over time. Brands that treat GEO as a project with a finish line find themselves facing a widening gap that grows more expensive to close the longer they wait.

The data that makes this concrete: Only 30% of brands that appear in an AI-generated answer show up again in the very next response to the same query, according to the 2026 State of AI Search report from AirOps. Run the same query five times in a row, and just 20% of brands persist across all five responses. The brands in that 20% are not there by chance. They have built citation authority deep and consistent enough to appear reliably despite the volatility.

The signal that tells you this belief is costing you: You appeared in AI answers some months ago but citation frequency has dropped. You are not sure when it happened or why—because you have no monitoring system in place to catch it.

⚡ Quick Win

Build a monthly GEO monitoring routine and treat it as non-negotiable. Every month, run your 10 to 15 most important category queries across ChatGPT, Perplexity, and Google AI Overviews. Document which brands appear. Track your Share of Voice: the percentage of queries where your brand appears. When that number drops, you have a short window to respond before a competitor consolidates the position. If you haven’t run your first audit yet, start here.
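Once the monthly results are recorded, the Share of Voice calculation is simple arithmetic. The sketch below assumes you have noted, for each query on one platform, the list of brands that appeared in the answer. The queries, brand names, and the function name share_of_voice are hypothetical.

```python
def share_of_voice(results: dict[str, list[str]], brand: str) -> float:
    """Return the percentage of tracked queries whose answer mentions brand."""
    if not results:
        return 0.0
    target = brand.casefold()
    # Count each query once if the brand appears anywhere in its answer.
    hits = sum(
        1
        for brands in results.values()
        if any(b.casefold() == target for b in brands)
    )
    return 100.0 * hits / len(results)


if __name__ == "__main__":
    # Hypothetical record of one month's manual checks on a single platform.
    month_results = {
        "best invoicing tool for freelancers": ["Example Co", "Rival One"],
        "invoicing software with time tracking": ["Rival One", "Rival Two"],
        "cheapest invoicing app": ["Rival Two"],
        "invoicing tool for agencies": ["Rival One"],
    }
    print(f"Share of Voice: {share_of_voice(month_results, 'Example Co'):.1f}%")
```

Running the same calculation separately for ChatGPT, Perplexity, and Google AI Overviews, and keeping the query set fixed from month to month, makes the numbers comparable over time.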

3. Frequently Asked Questions

Does good SEO hurt my GEO strategy?

No. Strong SEO is the foundation GEO builds from. The point is not that SEO is wrong. It is that SEO alone is no longer sufficient. Both disciplines are needed, and they require separate, deliberate investment.

How quickly can my brand start appearing in AI-generated answers after addressing these issues?

Technical fixes, such as unblocking AI crawlers and resolving entity consistency, can produce measurable improvement within 4 to 6 weeks. Content restructuring typically takes 10 to 14 weeks to show consistent citation improvement. Earned media is a 3 to 6 month programme, but it produces the most durable and compounding results.

If I only fix one thing, which of these five beliefs should I address first?

Start with Belief 2, specifically the technical access check. If AI crawlers cannot read your website, no other investment in GEO will produce results. Verify your robots.txt and Cloudflare settings before anything else. Once access is confirmed, move to entity consistency, then content structure, then earned media.

How do I know if AI is describing my brand inaccurately?

Open ChatGPT and type “What is [your business name]?” Read the response carefully. Look for hedging language such as “reportedly,” “claims to,” or “may offer,” which signals that AI does not have enough consistent signals to describe you with confidence. Then check the factual accuracy of the description against your current positioning, services, and messaging.

What is Share of Voice in the context of GEO, and how is it measured?

Share of Voice is the percentage of your tracked queries (the questions your potential customers ask AI about your category) where your brand appears in the generated answer. It is the most reliable GEO performance metric currently available. It is measured manually each month by running your core query set across ChatGPT, Perplexity, and Google AI Overviews and recording which brands appear in each response.

Final Thought: The Belief That’s Holding You Back Is the One That Feels Most Reasonable

Every myth on this list shares the same root: the assumption that AI search works the way traditional search used to work.

It does not. The rules changed. The signals changed. The platforms changed. And the brands that understand this—not in theory, but in practice, in their content structure, in their earned media pipeline, in their monthly monitoring routine—are the ones compounding an advantage right now that late movers will find genuinely difficult to close.

None of these beliefs make you wrong for having held them. They reflect the logic of a previous era—one that still applies in part, but no longer in full.

The question is what you do now that you know the difference.

🚀 Ready to Find Out Which of These Beliefs Has Been Shaping Your Strategy?

A GEO visibility audit is the fastest way to identify exactly where your brand stands—and which of these five issues is most urgent to address. At OddScrew, we map your complete Citation Gap in 48 hours: which queries you’re missing from, which competitors are being recommended instead, and exactly what it will take to close the gap. It is complimentary. And it starts with a single conversation.