What happened when we tested how three major AI platforms recommend skincare brands—and what it reveals about the signals that matter most

Reading time: 13–15 minutes | Level: Beginner to Intermediate

Case Study At-a-Glance

| Field | Detail |
|---|---|
| Industry | Consumer Skincare (D2C) |
| Platforms Tested | ChatGPT, Perplexity, Google Gemini |
| Query Type | High-intent purchase decision |
| Date Conducted | May 2026 |
| Key Finding | AI recommends brands with strong entity consistency, third-party corroboration, extractable content, and aligned community sentiment |
| Business Implication | Confident vs. cautious AI language shapes purchase intent before customers reach your site |


Executive Summary

We asked three AI platforms a simple question: “Which skincare brand should I start with as a beginner in India?”

The platforms recommended Minimalist, Cetaphil, Simple, and Dot & Key. One of India’s most recognized skincare brands didn’t appear.

When we asked about the absent brand directly, all three platforms responded with qualified, cautious language.

This wasn’t a fluke. It was a pattern that reveals how AI platforms evaluate brands—and why traditional brand awareness doesn’t automatically translate to AI recommendations.

This case study documents the experiment, identifies the four specific signals that determined these recommendations, and explains what this means for any business competing in an AI-first discovery environment.

The core insight: AI visibility is built on different foundations than brand awareness. The signals that earn confident AI recommendations—entity consistency, third-party corroboration, content extractability, and community sentiment alignment—are accessible to brands at any scale. But they require fundamentally different strategies than traditional visibility building.

The Visibility Paradox

High brand recognition, it turns out, doesn’t guarantee AI visibility—and this case study shows exactly why.

Consider a brand with high awareness and widespread market presence. When potential customers ask AI platforms for recommendations, that brand is either absent or recommended with hedging language that undermines purchase intent.

Meanwhile, a science-focused competitor appears consistently across ChatGPT, Google Gemini, and Perplexity—recommended confidently, explained clearly, cited reliably.

The question this raises: If brand awareness doesn’t determine AI visibility, what does?

The opportunity: The signals AI platforms prioritize are observable, measurable, and buildable. Brands that understand these signals can establish citation authority before competitors recognize the shift. The window for early-mover advantage is closing.

The New Rules of AI Recommendations

The way people discover products has fundamentally changed. When someone asks an AI platform for skincare recommendations, they’re not seeing a list of search results to evaluate; they’re getting 3–5 specific recommendations with reasoning already attached.

This shifts the entire game. Traditional visibility metrics measure exposure: ad impressions, influencer reach, website traffic. But AI platforms don’t recommend based on exposure. They recommend based on information they can verify, extract, and cite with confidence.

Four specific signals determine whether AI platforms recommend a brand confidently or hedge with cautious language:

- Community Sentiment as Citation Source — Do discussions in trusted communities align with brand positioning? AI platforms weight Reddit, specialized forums, and authentic user discussions heavily when forming recommendations.

- Entity Consistency Across Sources — Does Wikipedia describe your brand the same way Amazon does? Does your own site match how review platforms characterize you? Inconsistent descriptions signal uncertainty.

- Content Extractability — Can AI platforms parse your product information into citable facts? “Niacinamide 10%” is extractable. “Miracle glow serum” is marketing copy that requires interpretation.

- Third-Party Corroboration — Do independent sources validate your claims? Dermatologist recommendations, peer-reviewed mentions, and editorial coverage create corroboration that AI systems can cross-reference.

When these four signals align, AI recommends with confidence. When they conflict or remain weak, AI hedges with qualified, cautious language.

The business stakes: The language AI uses to describe your brand—confident versus cautious—shapes purchase intent before customers ever reach your site.

This case study examines how these signals played out for two Indian skincare brands with vastly different marketing approaches.

The Experiment

Methodology

We ran one standardized query across three AI platforms:

Which skincare brand should I start with as a beginner in India?

The query was deliberately chosen because it is:

- High-intent — represents actual purchase behavior

- Commercially relevant — the kind of question that drives revenue

- Representative — how real customers make decisions

Testing protocol:

- All queries were run in private/incognito browsing mode to minimize personalization bias

- No prior search history or logged-in accounts

- Queries conducted within the same 24-hour period to keep conditions comparable

- Screenshots captured immediately to document responses

We documented:

- Every brand mentioned across all three platforms

- The language AI used to describe each brand

- Which brands were absent

- What happened when we asked about absent brands directly
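Tallying which brands appear and which are missing is easy by hand for one query on three platforms, but it gets tedious once you repeat the test. A minimal sketch, assuming you paste each platform’s response text into a dictionary yourself; the platform labels and response snippets below are hypothetical placeholders, not the responses captured in this study, and the helper names are ours.

```python
import re

# Hypothetical placeholder responses; paste in the real text you capture.
responses = {
    "Platform A": "Start with Minimalist or Cetaphil for a gentle routine.",
    "Platform B": "Minimalist and Dot & Key are popular picks.",
    "Platform C": "Try Simple for sensitive skin, or Minimalist for actives.",
}

# Brands to track, including any you expect to see but suspect may be missing.
TRACKED = ["Minimalist", "Cetaphil", "Simple", "Dot & Key", "Mamaearth"]


def mentioned(brand: str, text: str) -> bool:
    """Case-sensitive whole-word match, so "simple routine" does not count as Simple."""
    return re.search(r"\b" + re.escape(brand) + r"\b", text) is not None


def mentions(by_platform: dict[str, str], brands: list[str]) -> dict[str, list[str]]:
    """Map each brand to the platforms whose response names it."""
    return {
        brand: [p for p, text in by_platform.items() if mentioned(brand, text)]
        for brand in brands
    }


def absent(by_platform: dict[str, str], brands: list[str]) -> list[str]:
    """Brands that no platform mentioned."""
    return [b for b, platforms in mentions(by_platform, brands).items() if not platforms]


for brand, platforms in mentions(responses, TRACKED).items():
    print(f"{brand}: {', '.join(platforms) or 'absent'}")
```

Case-sensitive matching is a deliberate trade-off: it avoids counting the everyday word “simple” as the brand, but a sentence that opens with “Simple” would still match, so spot-check the output against the screenshots.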

What the AI Platforms Returned

Unprompted Recommendations

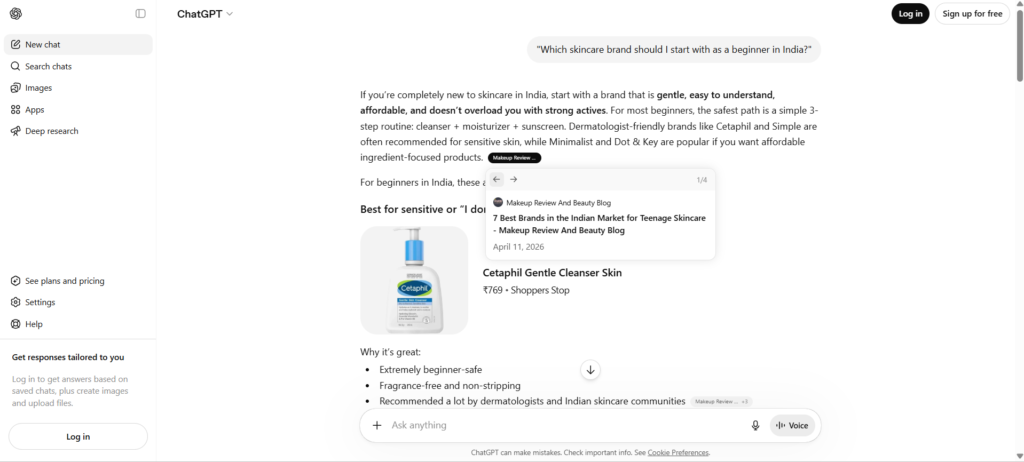

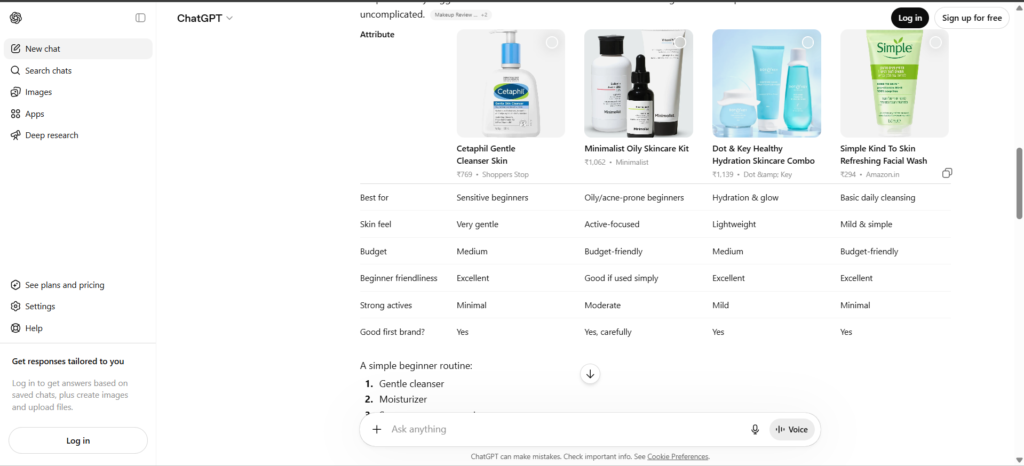

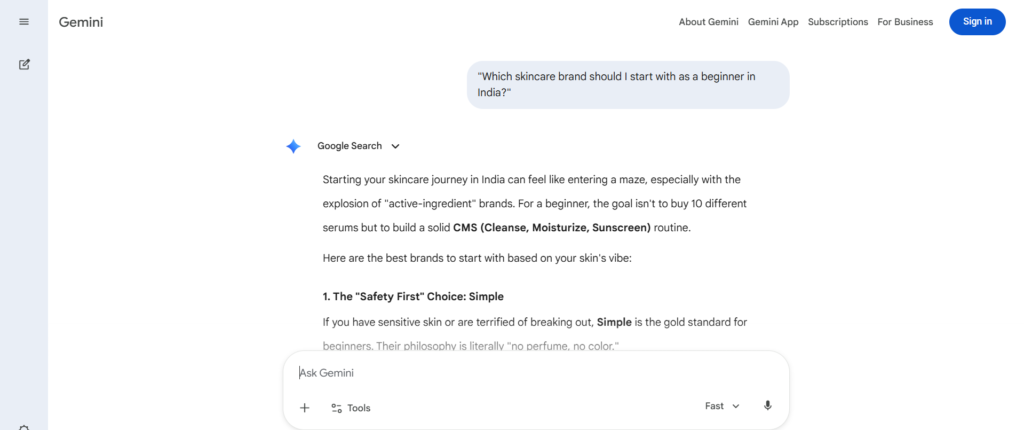

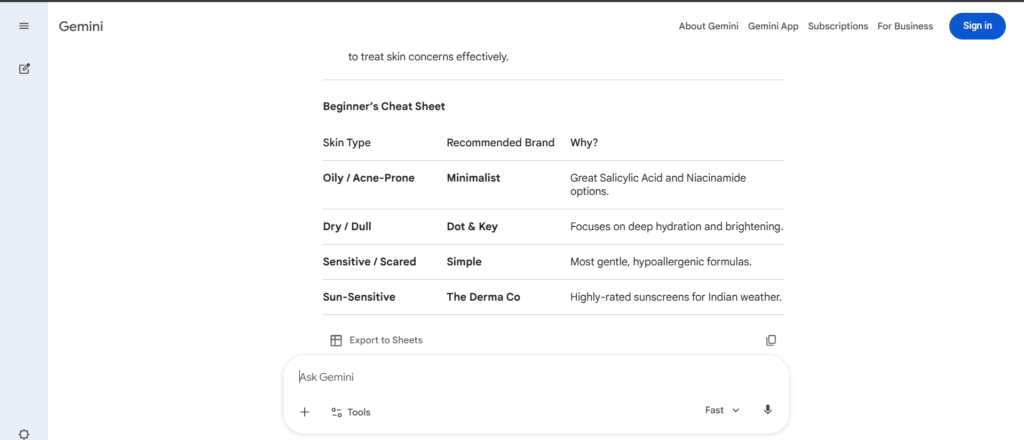

Across all three platforms, we observed consistent patterns in which brands appeared and how they were described:

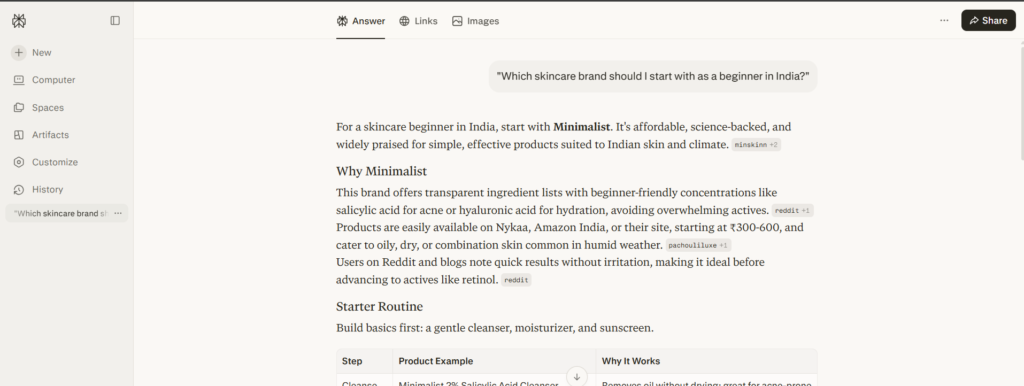

Minimalist appeared on every platform — typically as the first or second recommendation. AI described it as “science-backed,” “ingredient-transparent,” and ideal for beginners due to clear active ingredient formulations.

Plum, Dot & Key, and Simple Skincare appeared across multiple platforms, each with specific product attributes: gentle formulations, hydration focus, suitable for Indian skin types.

The recommendations included:

- Specific reasoning for each brand

- Citations from dermatology publications and community platforms

- Suggested specific products within each brand’s range

ChatGPT’s Initial Response

Google Gemini’s Initial Response

Perplexity’s Initial Response

What We Observed About Absent Brands

One of India’s most recognized skincare brands—Mamaearth, publicly listed under Honasa Consumer Ltd.—did not appear in the unprompted recommendations from any of the three platforms.

Important context: This observation is not a commentary on product quality. We’re examining AI recommendation patterns to understand the signals that AI platforms prioritize. Mamaearth has built a successful business serving millions of customers. Our focus here is on understanding how AI makes recommendations—not on evaluating the brand’s products or business model.

What Happens When AI Is Asked Directly

To understand how AI platforms view brands that don’t appear in initial recommendations, we asked each platform directly:

What about Mamaearth?

What the AI Platforms Said

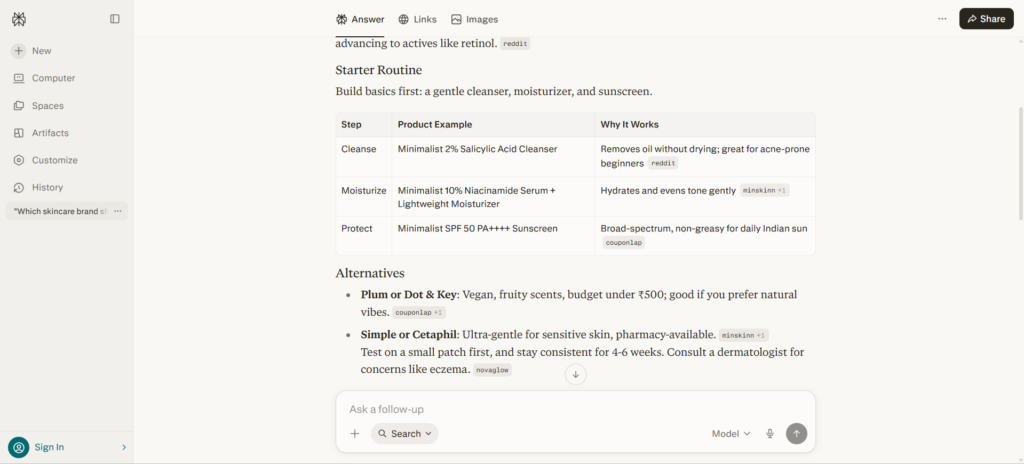

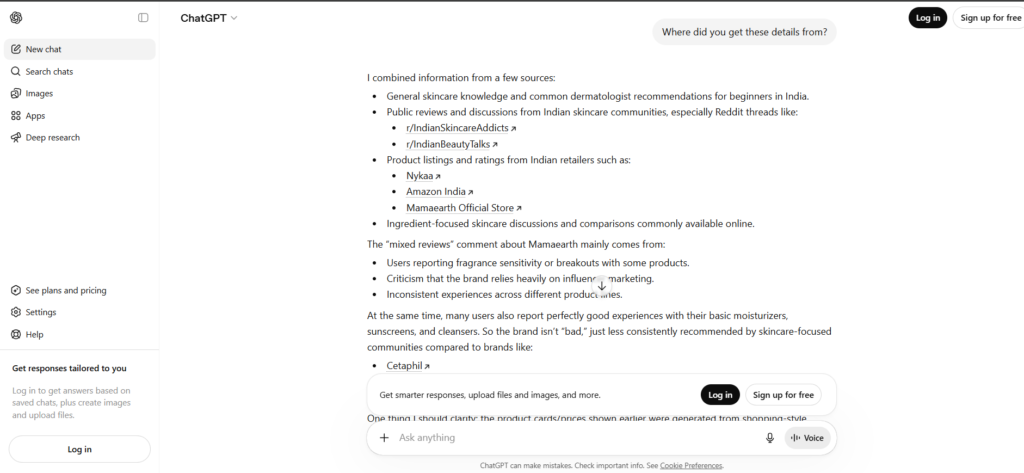

1. ChatGPT

ChatGPT’s response included:

- Qualified language: “okay for some people, but I usually don’t recommend it as the very first skincare brand for beginners”

- Specific caveats: “especially if you have sensitive or acne-prone skin”

- Citations from Reddit threads in Indian skincare communities

- References to mixed user experiences

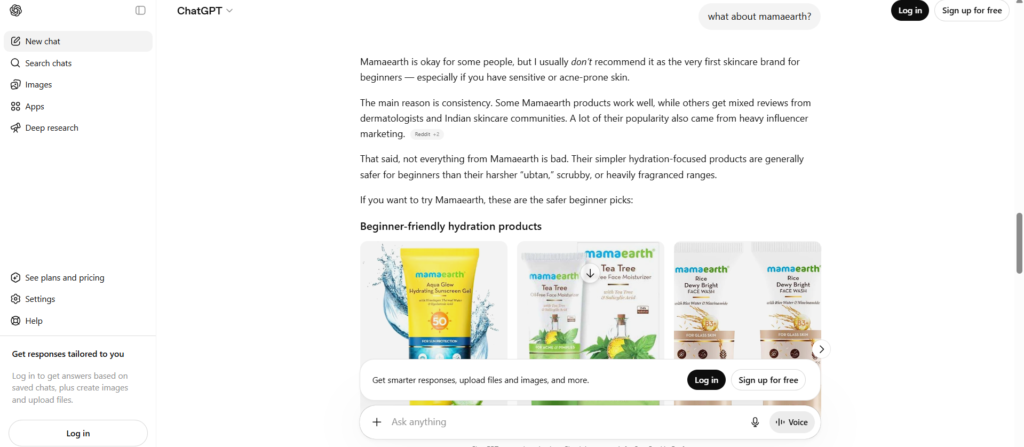

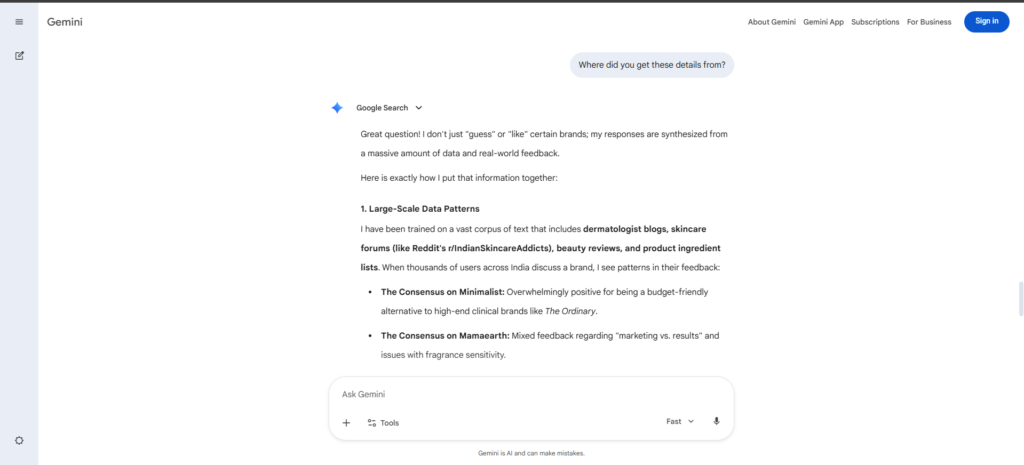

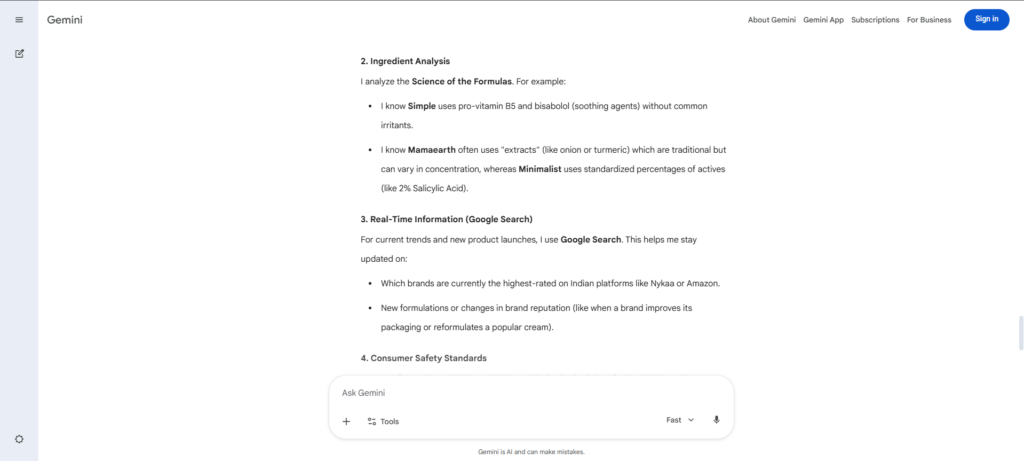

2. Google Gemini

Gemini’s response:

- Described the brand as “a bit of a polarising brand in the skincare community”

- Observed that “natural” and “toxin-free” marketing claims aren’t universally corroborated by independent sources

- Suggested that other brands may be more suitable for sensitive skin

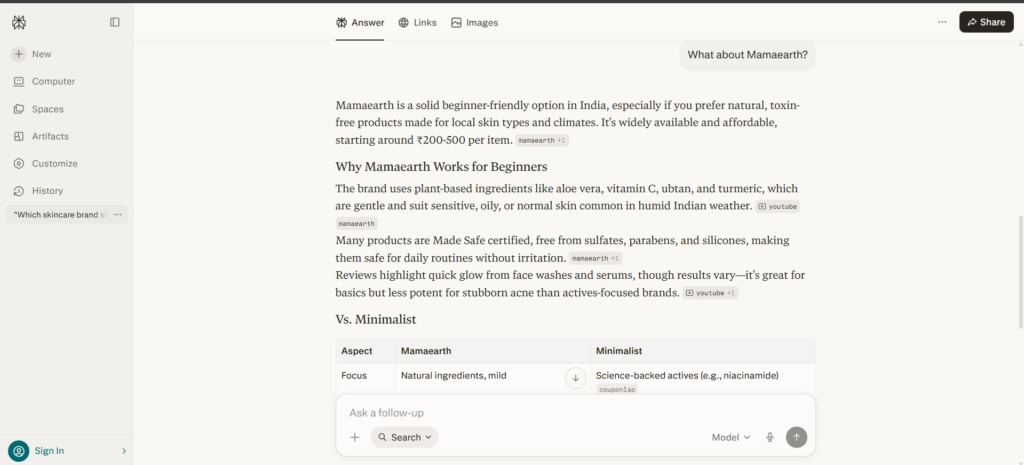

3. Perplexity

Perplexity’s response:

- Acknowledged Mamaearth as a valid option in some product ranges

- Ranked it below other brands for beginners

- Provided source citations supporting the positioning

The Pattern We Observed

When asked directly, AI platforms provided information about Mamaearth—but the language was consistently more qualified and cautious compared to their unprompted recommendations.

What this reveals: In AI search, how a brand is described matters as much as whether it appears. Qualified recommendations create a different impression than confident, specific recommendations. This isn’t about whether a brand is “good” or “bad”—it’s about understanding which signals AI platforms use to determine recommendation confidence.

The Anatomy of Consistent AI Recommendations

Minimalist’s visibility advantage wasn’t the result of deliberate AI optimization. The brand’s product development philosophy (transparent ingredient lists, standardized percentages, scientific naming) naturally created the signals AI platforms prioritize.

1. Authentic positioning creates AI-readable content

The brand’s commitment to “no hidden ingredients, no ambiguous claims” meant every product page was structured as a fact sheet. When AI platforms parsed Minimalist’s content, they found extractable data points: specific percentages, clearly named actives, measurable claims. There was no translation layer needed—the content was already in the format AI systems prefer.

2. Community alignment by design

Because Minimalist marketed itself as “science-focused,” the people who discussed it on Reddit were already aligned with that positioning. There was no gap between brand narrative and community perception—the brand attracted exactly the audience most likely to validate its positioning in forums AI platforms read.

3. The compound effect

Once AI platforms began citing Minimalist consistently, this created a reinforcing cycle:

More AI visibility → more customers discovering via AI → more genuine reviews from that audience → stronger community validation → even more consistent AI citations → higher recommendation confidence

Key Insight: The brands that will dominate AI recommendations aren’t necessarily those with the highest brand awareness. They’re the ones whose authentic positioning aligns with how AI systems evaluate credibility.

The GEO Analysis: Four Visibility Signals That AI Platforms Prioritize

Through analyzing the responses, we identified four specific signals that appear to influence how confidently AI platforms recommend brands:

Signal 1: Community Sentiment as Citation Source

What We Observed:

AI platforms frequently cited community discussions—particularly Reddit—when explaining their recommendations. The sentiment and consistency of these community discussions appeared to influence recommendation confidence.

For brands where community discussions showed mixed sentiment or varying experiences, AI platforms used more qualified language in their recommendations.

Why This Signal Matters:

AI treats community platforms as trusted sources for real-world product experiences. When community sentiment aligns consistently in one direction, AI can recommend with more confidence. When sentiment is mixed or contradictory, AI tends toward more cautious language.

The Contrast in This Case:

Community discussions about Minimalist tended to align with the brand’s positioning (science-focused, ingredient-transparent). Community discussions about Mamaearth showed more varied perspectives, which AI platforms reflected in their more qualified responses.

How AI Platforms Explain Their Sources

1. Google Gemini

The response showed clear patterns:

- The Consensus on Minimalist: “Overwhelmingly positive for being a budget-friendly alternative to high-end clinical brands”

- The Consensus on Mamaearth: “Mixed feedback regarding ‘marketing vs. results’ and issues with fragrance sensitivity”

2. ChatGPT

“The ‘mixed reviews’ comment about Mamaearth mainly comes from:

- Users reporting fragrance sensitivity or breakouts,

- Inconsistent experiences across different product lines.”

Note: These are AI-generated observations based on community data available to the platform, not OddScrew’s assessment.

3. Perplexity

Perplexity displays source citations directly in its interface through a dedicated “Links” tab. When searching for “Mamaearth skincare for beginners India,” the platform showed multiple sources, including:

- Mamaearth’s official site

- YouTube reviews

- Product pages

This makes its sourcing transparent by default, without requiring follow-up questions.

Signal 2: Brand Claim vs. Independent Source Consistency

What We Observed:

AI platforms cross-reference brand claims with independent sources. When brand messaging and independent sources align closely, AI recommendations tend to be more confident and specific.

In this case, Minimalist’s positioning (“science-backed,” “ingredient-transparent”) was echoed consistently across independent sources including ingredient analysis platforms and dermatology publications.

For Mamaearth, the brand’s positioning around “natural” and “toxin-free” wasn’t always described in the same terms by independent sources, which appeared to contribute to more qualified AI language.

Why This Signal Matters:

AI prioritizes corroboration. Brands described consistently across multiple independent sources earn more confident recommendations—regardless of the strength of the brand’s own claims.

The Key Insight: How a brand is described by third parties matters as much as (or more than) how it describes itself.

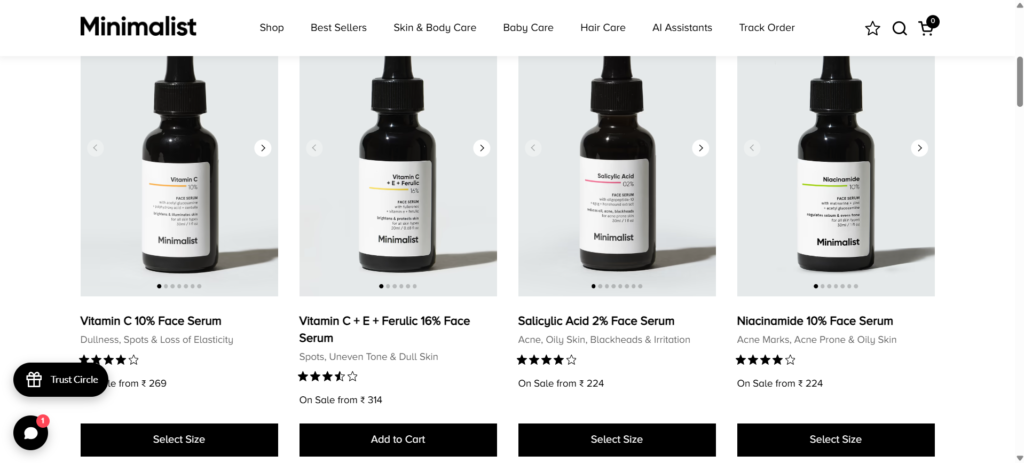

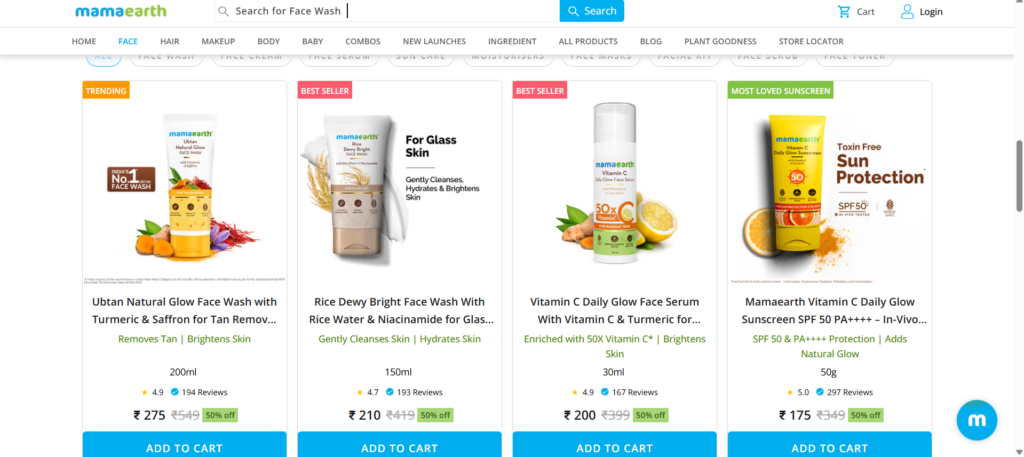
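One rough way to operationalize this signal in your own audit is to list the claims your brand makes and check how many independent sources repeat them. The sketch below uses hypothetical claims and snippets and naive substring matching; it illustrates the idea of a “corroboration share,” not a method any AI platform is known to use.

```python
# Hypothetical brand claims and independent-source snippets, for illustration only.
BRAND_CLAIMS = ["science-backed", "ingredient-transparent"]
INDEPENDENT_SNIPPETS = [
    "A science-backed brand with clearly labelled percentages.",
    "Reviewers call the range science-backed and ingredient-transparent.",
    "Affordable serums, though the fragrance bothered some users.",
]


def corroboration(claims: list[str], snippets: list[str]) -> dict[str, float]:
    """Share of independent snippets that repeat each claim (naive substring match)."""
    if not snippets:
        return {claim: 0.0 for claim in claims}
    lowered = [s.lower() for s in snippets]
    return {
        claim: sum(claim.lower() in s for s in lowered) / len(lowered)
        for claim in claims
    }


for claim, share in corroboration(BRAND_CLAIMS, INDEPENDENT_SNIPPETS).items():
    print(f"{claim}: {share:.0%} of independent sources")
```

A claim that only your own site makes would score zero here, which is the gap this signal describes.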

Signal 3: Content Extractability

What We Observed: AI platforms showed a preference for content that could be extracted and cited precisely. Product names, ingredient lists, and descriptions that stated specific, verifiable information appeared more frequently in recommendations.

The extractability gap:

For Minimalist, the AI can confidently state: “Minimalist offers a 10% niacinamide serum with 1% zinc.”

For Mamaearth, the AI has to qualify: “Mamaearth has products with niacinamide” (less specific) or dive into ingredient lists that may vary by market or formulation.

| | Minimalist: Scientific Naming | Mamaearth: Benefit-Focused Naming |
|---|---|---|
| Naming approach | Ingredient names with exact percentages | Natural ingredients with benefit descriptions |
| Example products | Vitamin C 10% Face Serum; Salicylic Acid 2% Face Serum; Niacinamide 10% Face Serum; Vitamin C + E + Ferulic 16% Face Serum; AHA PHA BHA 32% Face Peel | Ubtan Natural Glow Face Wash with Turmeric & Saffron for Tan Removal; Rice Dewy Bright Face Wash With Rice Water & Niacinamide for Glass Skin; Vitamin C Daily Glow Face Serum With Vitamin C & Turmeric; Vitamin C Daily Glow Sunscreen SPF 50 PA ++++ |
| AI extractability | Direct percentage extraction; specific, citable claims; no interpretation needed | Requires parsing ingredient lists; benefits, not concentrations; interpretation required |

Why This Signal Matters: AI rewards content that can be cited precisely without interpretation. The easier it is for AI to extract specific, verifiable claims, the more confidently it can include those claims in recommendations.

The Pattern: Content built for emotional resonance can come at the expense of content built for AI extraction. Both serve important purposes, but they optimize for different outcomes.
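To make the extractability gap concrete, here is a toy sketch that scans the product names from the comparison table for an explicit “number + %” pattern. It illustrates the idea only and is not a description of how ChatGPT, Gemini, or Perplexity actually parse pages; the function names are hypothetical.

```python
import re
from typing import Optional

# Product names copied from the comparison table.
MINIMALIST = [
    "Vitamin C 10% Face Serum",
    "Salicylic Acid 2% Face Serum",
    "Niacinamide 10% Face Serum",
    "Vitamin C + E + Ferulic 16% Face Serum",
    "AHA PHA BHA 32% Face Peel",
]
MAMAEARTH = [
    "Ubtan Natural Glow Face Wash with Turmeric & Saffron for Tan Removal",
    "Rice Dewy Bright Face Wash With Rice Water & Niacinamide for Glass Skin",
    "Vitamin C Daily Glow Face Serum With Vitamin C & Turmeric",
    "Vitamin C Daily Glow Sunscreen SPF 50 PA ++++",
]

# A stated concentration: a number (optionally with decimals) followed by "%".
PERCENT = re.compile(r"(\d+(?:\.\d+)?)\s*%")


def extract_concentration(name: str) -> Optional[float]:
    """Return the first percentage stated in a product name, or None."""
    match = PERCENT.search(name)
    return float(match.group(1)) if match else None


def extractable_share(names: list[str]) -> float:
    """Fraction of product names that state an explicit concentration."""
    hits = [n for n in names if extract_concentration(n) is not None]
    return len(hits) / len(names)


for brand, names in (("Minimalist", MINIMALIST), ("Mamaearth", MAMAEARTH)):
    print(brand, extractable_share(names))
```

The sketch looks only at product names. Full ingredient lists are out of scope, which mirrors the point above: benefit-led names push the specifics into content that requires parsing.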

Signal 4: Entity Description Consistency

What We Observed:

AI platforms appeared to prioritize brands with consistent descriptions across multiple sources—from business directories to industry publications to community discussions.

Minimalist is described similarly across Crunchbase, international skincare publications, and ingredient analysis platforms: science-backed, ingredient-transparent, accessible actives. The entity picture AI encounters is remarkably consistent.

Mamaearth’s description varies more across sources—from “leading natural beauty brand” in some sources to discussions about marketing approaches and community perspectives in others. This is natural for a larger, more visible company with broader media coverage—but the variation in descriptions may contribute to more qualified AI language.

Why This Signal Matters:

When AI cross-references multiple sources and finds consistent descriptions, it can synthesize that information into confident recommendations. When descriptions vary, AI tends to reflect that variation with more cautious language.

The Key Insight: It’s not about having less coverage—it’s about having more consistent coverage. Brands with broader visibility but less consistent entity descriptions face a different challenge than brands with narrower but more aligned coverage.
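Consistency can be approximated by tagging how each source describes a brand and measuring how much those descriptor sets overlap. This sketch uses mean pairwise Jaccard similarity (shared descriptors divided by all descriptors) as a stand-in metric; the sources and descriptor sets are hypothetical, and nothing here implies AI platforms compute this score.

```python
from itertools import combinations

# Hypothetical descriptor sets, hand-tagged from how each source describes a brand.
consistent = {
    "directory": {"science-backed", "ingredient-transparent", "affordable"},
    "publication": {"science-backed", "ingredient-transparent", "accessible actives"},
    "forum": {"science-backed", "affordable", "accessible actives"},
}
scattered = {
    "directory": {"natural", "budget"},
    "publication": {"premium", "clinical"},
    "forum": {"natural", "fragrance-heavy"},
}


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two descriptor sets: |A & B| / |A | B|."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def consistency(descriptions: dict[str, set[str]]) -> float:
    """Mean pairwise Jaccard similarity across all sources (1.0 if fewer than two)."""
    pairs = list(combinations(descriptions.values(), 2))
    if not pairs:
        return 1.0
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


print(round(consistency(consistent), 2), round(consistency(scattered), 2))
```

A score near 1 means every source uses the same terms for the brand; the scattered example lands far lower because each source picks different descriptors, even though it has just as many sources.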

The Business Impact: What Strong GEO Signals Create

According to Adobe Analytics (2025 Holiday Recap), AI-referred traffic converts 31% better than every other source.

As AI platforms become a primary discovery channel for purchase decisions, the language they use when describing brands—confident vs. qualified, specific vs. vague, recommended vs. acknowledged—directly shapes how potential customers perceive and approach those brands before they ever visit a website.

The four signals create measurable business outcomes:

- Entity consistency determines recommendation confidence. Brands described the same way across Wikipedia, business directories, review platforms, and industry publications earn confident AI recommendations. Inconsistent descriptions across sources lead to qualified, hedging language that undermines purchase intent.

- Extractable content becomes citable facts. Product information structured for AI extraction (specific percentages, clear ingredient names, measurable claims) can be cited confidently. Marketing language that prioritizes emotion over precision requires interpretation, reducing citation confidence.

- Third-party validation builds authority. Independent corroboration from dermatologists, ingredient databases, industry publications, and review platforms creates recommendation confidence. Self-made claims without external validation receive cautious treatment.

- Community alignment shapes perception. When community discussions validate brand positioning, AI can synthesize both sources into confident recommendations. Gaps between brand claims and community sentiment lead to qualified language that reflects that disconnect.

These signals compound over time: early investment establishes citation authority that reinforces future recommendations, creating advantages that build with each AI interaction.

The Result: A brand that AI can describe, recommend, and cite with confidence—across every platform, for every relevant query in its category.

Frequently Asked Questions

Is this case study an evaluation of the brands involved?

No. This case study examines AI recommendation patterns, specifically which signals influence how confidently AI platforms recommend brands. We’re analyzing the mechanics of AI search behavior, not evaluating product quality or business success.

Will these AI recommendations change over time?

Yes. Between 40% and 60% of sources cited by AI change month to month (AirOps, 2026). These results reflect the state at publication. As brands adjust their signals (entity consistency, content structure, third-party presence, community engagement), AI recommendations will reflect those changes. That’s the opportunity: AI visibility is buildable and improvable.

Does this apply to B2B businesses?

Completely. The same signals influence AI recommendations in B2B categories. The platforms may differ (LinkedIn vs. Reddit, for example), but the pattern is the same.

How can I check where my brand stands?

Run the experiment yourself. Ask AI platforms the questions your potential customers ask when choosing a business in your category. Then ask directly about your brand. The language AI uses (confident and specific vs. qualified and cautious) reveals your current signal strength.

Where should I start?

Start with entity consistency. Ensure your brand is described the same way across your website, LinkedIn, business directories, and third-party sources. This is the most foundational signal, and addressing inconsistencies here often has the fastest impact on AI recommendation confidence.

Lessons for D2C Brands

This case study reveals four principles that extend beyond skincare to any brand competing for AI visibility:

I. Signal consistency compounds over time

The first brand to establish consistent signals across all four dimensions builds citation authority that becomes increasingly difficult to displace. AI platforms develop confidence in sources they’ve cited successfully before. Early movers in AI optimization gain compounding advantages similar to early SEO adopters in the 2000s.

II. Community alignment matters more than community size

A small, aligned community in the right forums can carry more weight than a massive but varied social media following. Ten Reddit threads in r/IndianSkincareAddicts may matter more than 100,000 Instagram followers when AI platforms are forming recommendations. The community needs to validate your positioning, not just mention your brand.

III. Content structure beats content volume

One product page with extractable facts (percentages, specific claims, clear categorization) outweighs ten pages of benefit-focused marketing copy. AI platforms can’t cite what they can’t parse. The format of your information matters as much as the information itself.

IV. Marketing narrative versus technical facts creates strategic tension

Brands face a choice: optimize for emotional resonance (benefit language, lifestyle positioning, aspirational messaging) or optimize for AI extractability (scientific naming, specific claims, factual structure). The most successful approach may be bifurcation—emotional marketing in ads and social, technical precision in product pages and third-party content.

Unlike traditional media advantages that require massive budgets, the four visibility signals are buildable through strategic content decisions. Entity consistency, community engagement, extractable formatting, and third-party validation are accessible to brands at any scale. The question is whether brands recognize the shift before competitors establish unassailable citation authority.

Next Steps: What You Can Do About It

Quick Win: Run Your Own Audit

Open ChatGPT and type:

- What is [your brand name]?

- The five most common questions your potential customers ask about your category

Read what comes back. Note whether AI hedges, qualifies, or recommends with confidence.
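When you run these prompts across many queries, a crude keyword tally can help triage responses before you read them closely. This is a hypothetical sketch: the marker lists are seeded with phrases quoted earlier in this case study plus a few generic qualifiers, and plain substring counting will misread negations and context, so treat the label as a prompt for manual review rather than a measurement.

```python
# Hypothetical marker lists, seeded with phrases quoted in this case study.
HEDGE_MARKERS = [
    "okay for some people",
    "don't recommend",
    "polarising",
    "polarizing",
    "mixed",
    "may be",
    "depends on",
]
CONFIDENT_MARKERS = [
    "ideal for",
    "science-backed",
    "great first",
    "recommend starting with",
]


def count_markers(text: str, markers: list[str]) -> int:
    """Count case-insensitive occurrences of each marker phrase."""
    # Normalise curly apostrophes so "don’t" matches "don't".
    lowered = text.lower().replace("\u2019", "'")
    return sum(lowered.count(marker) for marker in markers)


def classify(text: str) -> str:
    """Label a response 'hedged', 'confident', or 'neutral' by comparing marker counts."""
    hedges = count_markers(text, HEDGE_MARKERS)
    confident = count_markers(text, CONFIDENT_MARKERS)
    if hedges > confident:
        return "hedged"
    if confident > hedges:
        return "confident"
    return "neutral"


print(classify("It's okay for some people, but I usually don’t recommend it for beginners."))
print(classify("Minimalist is science-backed and ideal for beginners."))
```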

For the full methodology, see our guide: How to Run a GEO Visibility Audit.

Want Expert Help?

A professional GEO visibility audit identifies exactly which signals are working, which are conflicting, and what it takes to build consistent AI recommendations.

At OddScrew, we map your complete Citation Gap—which queries you’re missing from, which competitors are being recommended instead, and exactly what it takes to close the gap.

Book your complimentary GEO audit →

About This Case Study Series

This is the first entry in OddScrew’s Case Study series—a running documentation of real AI search experiments across different brands and categories. Every finding is based on live searches, documented at the time of publication.

The purpose of this series is educational: to help businesses understand the specific signals that influence AI recommendations. We examine publicly observable patterns—the kind of recommendations any person can see by running the same searches.

No brand featured in this series is being evaluated for product quality or business merit. The focus is always the same: understanding how AI search engines build their picture of a brand, and what that reveals about the signals that drive AI recommendations.